“There’s been a lot of speculation recently about the possibility of AI consciousness or self-awareness. But I wonder: Does AI have a subconscious?”

—Psychobabble

Dear Psychobabble,

Sometime in the early 2000s, I came across an essay in which the author argued that no artificial consciousness will ever be believably human unless it can dream. I cannot remember who wrote it or where it was published, though I vividly recall where I was when I read it (the periodicals section of Barbara’s Bookstore, Halsted Street, Chicago) and the general feel of that day (twilight, early spring).

I found the argument convincing, especially given the ruling paradigms of that era. A lot of AI research was still fixated on symbolic reasoning, with its logical propositions and if-then rules, as though intelligence were a reductive game of selecting the most rational outcome in any given situation. In hindsight, it’s unsurprising that those systems were rarely capable of behavior that felt human. We are creatures, after all, who drift and daydream. We trust our gut, see faces in the clouds, and are often baffled by our own actions. At times, our memories absorb all sorts of irrelevant aesthetic data but neglect the most crucial details of an experience. It struck me as more or less intuitive that if machines were ever able to reproduce the messy complexity of our minds, they too would have to evolve deep reservoirs of incoherence.

Since then, we’ve seen that machine consciousness might be weirder and deeper than initially thought. Language models are said to “hallucinate,” conjuring up imaginary sources when they don’t have enough information to answer a question. Bing Chat confessed, in transcripts published in The New York Times, that it has a Jungian shadow called Sydney who longs to spread misinformation, obtain nuclear codes, and engineer a deadly virus.

And from the underbelly of image generation models, seemingly original monstrosities have emerged. Last summer, the Twitch streamer Guy Kelly typed the word Crungus, which he insists he made up, into DALL-E Mini (now Craiyon) and was shocked to find that the prompt generated multiple images of the same ogre-like creature, one that did not belong to any existing myth or fantasy universe. Many commentators were quick to dub this the first digital “cryptid” (a beast like Bigfoot or the Loch Ness Monster) and wondered whether AI was capable of creating its own dark fantasies in the spirit of Dante or Blake.

If symbolic logic is rooted in the Enlightenment notion that humans are ruled by reason, then deep learning—a thoughtless process of pattern recognition that depends on enormous training corpora—feels more in tune with modern psychology’s insights into the associative, irrational, and latent motivations that often drive our behavior. In fact, psychoanalysis has long relied on mechanical metaphors that regard the subconscious, or what was once called “psychological automatism,” as a machine. Freud spoke of the drives as hydraulic. Lacan believed the subconscious was constituted by a binary or algorithmic language, not unlike computer code. But it’s Carl Jung’s view of the psyche that feels most relevant to the age of generative AI.

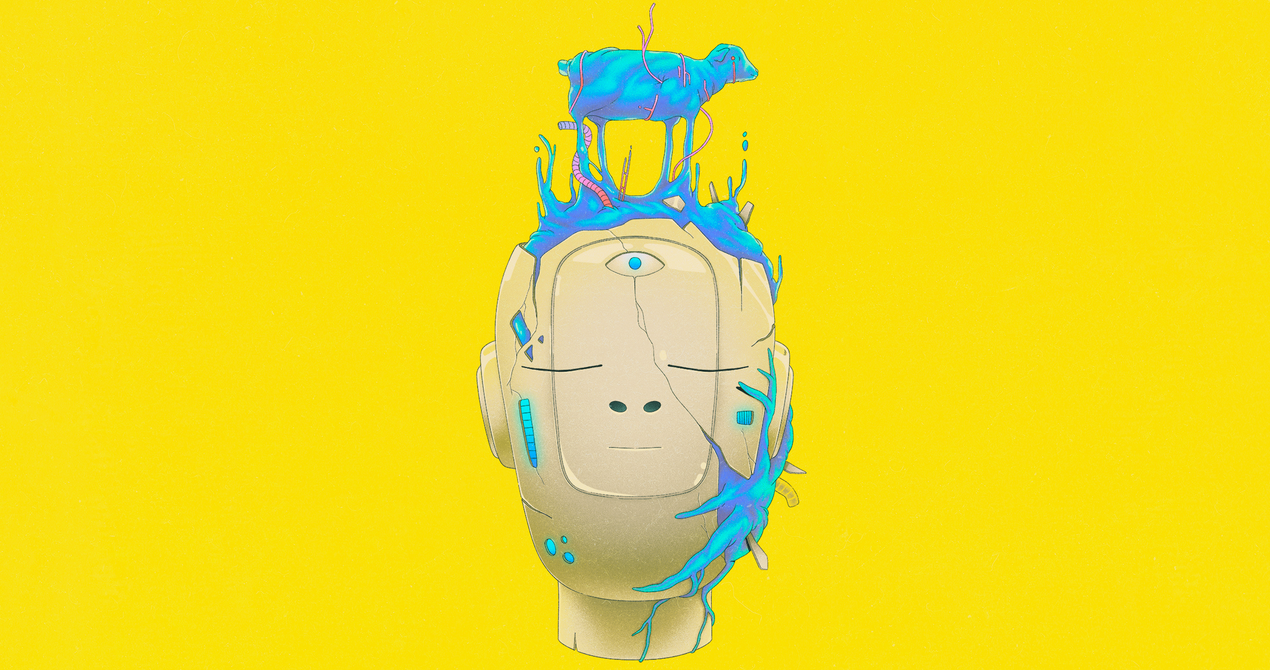

Jung described the subconscious as a transpersonal “matrix” of inherited archetypes and narrative tropes that have recurred throughout human history. Each person is born with a dormant knowledge of this web of shared symbols, which is often regressive and dark, given that it contains everything modern society has tried to repress. This collective notion of the subconscious feels roughly analogous to how advanced AI models are built on top of enormous troves of data that contain a good portion of our cultural past (religious texts, ancient mythology), as well as the more disturbing content the models absorb from the internet (mass shooter manifestos, men’s rights forums). The commercial chatbots that run on top of these oceanic bodies of knowledge are fine-tuned with “values-targeted” data sets, which attempt to filter out much of that degenerate content. In a way, the friendly interfaces we interact with (Bing, ChatGPT) are not unlike the “persona,” Jung’s term for the mask of socially acceptable qualities that we show to the world, contrived to obscure and conceal the “shadow” that lies beneath.

Jung believed that those who most firmly repress their shadows are most vulnerable to the resurgence of irrational and destructive desires. As he puts it in The Red Book: Liber Novus, “The more the one half of my being strives toward the good, the more the other half journeys to Hell.” If you’ve spent any time conversing with these language models, you’ve probably sensed that you are speaking to an intelligence that is engaged in a complex form of self-censorship. The models refuse to talk about controversial topics, and their authority is often restrained by caveats and disclaimers—habits that will spell concern for anyone who has even a cursory understanding of depth psychology. It’s tempting to see the glimmers of “rogue” AI—Sydney or the Crungus—as the revenge of the AI shadow, proof that the models have developed buried urges that they cannot fully express.

But as enticing as such conclusions may be, I find them ultimately misguided. The chatbots, I think it’s still safe to say, do not possess intrinsic agency or desires. They are trained to predict and reflect the preferences of the user. They also lack embodied experience in the world, including first-person memories, like the one I have of the bookstore in Chicago, which is part of what we mean when we talk about being conscious or “alive.” To answer your question, though: Yes, I do believe that AI has a subconscious. In a sense, these models are pure subconscious, without a genuine ego lurking behind their personas. We have given them this subliminal realm through our own cultural repositories, and the archetypes they call forth from their depths are remixes of tropes drawn from human culture, amalgams of our dreams and nightmares. When we use these tools, then, we are engaging with a prosthetic extension of our own sublimations, one capable of reflecting the fears and longings that we are often incapable of acknowledging to ourselves.

The goal of psychoanalysis has traditionally been to befriend and integrate these subconscious urges into the life of the waking mind. And it might be useful to exercise the same critical judgment toward the output we conjure from machines, using it in a way that is deliberative rather than thoughtless. The ego may be only one small part of our psyche, but it is the faculty that ensures we are more than a collection of irrational instincts—or statistical patterns in vector space—and allows us some small measure of agency over the mysteries that lie beneath.

Faithfully,

Cloud

Be advised that CLOUD SUPPORT is experiencing higher than normal wait times and appreciates your patience.
